Azure AI Foundry pricing in 2026 follows a token-based billing model: every model call is charged separately for input tokens and output tokens, at rates that vary significantly across the model catalogue. Microsoft’s official pricing calculator covers the token portion accurately but leaves out Azure AI Search, fine-tuned model hosting, monitoring infrastructure, and support costs, which consistently push real deployment bills 15% to 40% above the token estimate. This guide covers the full picture: model rate cards, deployment options, hidden cost categories, real-world scenario estimates, and the spend controls every enterprise deployment needs before going live.

One clarification worth making upfront: Azure AI Studio no longer exists as a separate product. Microsoft rebranded and consolidated it into Azure AI Foundry in late 2024, combining the model catalogue, Azure OpenAI Service, and the development tooling into one unified platform. References to “Azure AI Studio” in older documentation refer to the same service. All pricing in this post reflects Global Standard pay-as-you-go rates as of May 2026. Always verify current figures on the official Azure OpenAI Service pricing page before finalising a budget.

Understanding Tokens: The Foundation of Azure AI Foundry Pricing 2026

Every Azure AI Foundry cost calculation starts with the token. Models do not process words or sentences. They process tokens, which are subword units the model uses internally to represent text. The practical conversion in 2026: 1,000 tokens equals approximately 750 words, or roughly two pages of standard business prose. A short email is around 150 tokens. A five-page document runs to 2,000 to 2,500 tokens. A RAG prompt that includes system instructions, retrieved document chunks, and conversation history can reach 6,000 to 10,000 tokens before a user submits a single query.

Azure AI Foundry bills input tokens and output tokens at different rates, and the gap between them has a major effect on production costs.

- Input tokens cover everything sent to the model: the system prompt, the user’s question, retrieved knowledge base chunks, and any conversation history passed in the request. These are directly controllable through prompt design and retrieval architecture.

- Output tokens are what the model generates in response. For most models covered in this guide, output tokens cost roughly 3x to 4x as much as input tokens (GPT-4o: $2.50 input versus $10.00 output per million), because the model generates each output token sequentially rather than processing input in parallel. Verbose response instructions drive up output costs directly.
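The split billing described above can be sketched as a per-call cost function. This is a minimal illustration, not an official formula: the function name `call_cost` is hypothetical, and the rates plugged in are the GPT-4o Global Standard figures quoted in this guide ($2.50 input, $10.00 output per million tokens).

```python
def call_cost(input_tokens: int, output_tokens: int,
              input_rate_per_m: float, output_rate_per_m: float) -> float:
    """Return the USD cost of one model call given per-million-token rates."""
    return (input_tokens * input_rate_per_m
            + output_tokens * output_rate_per_m) / 1_000_000


# Assumed example: a RAG call with an 8,000-token prompt and a 400-token answer,
# priced at the GPT-4o rates quoted in this guide.
rag_call = call_cost(8_000, 400, input_rate_per_m=2.50, output_rate_per_m=10.00)
print(f"RAG call: ${rag_call:.4f}")

# The same answer length with a 600-token prompt, for comparison.
short_call = call_cost(600, 400, input_rate_per_m=2.50, output_rate_per_m=10.00)
print(f"Short call: ${short_call:.4f}")
```

Multiplying the per-call figure by expected monthly call volume gives a first-pass token budget before infrastructure costs are added.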

Azure AI Foundry Pricing 2026: The Complete Model Rate Card

The table below covers the models most commonly deployed in Azure AI Foundry production environments in 2026. All figures are Global Standard pay-as-you-go, priced per one million tokens. Regional and Data Zone deployments carry a premium for data residency. Verify current rates on the Azure AI Foundry Models pricing page before building a budget.

| Model | Provider | Input per 1M Tokens | Output per 1M Tokens | Context Window | Best Use Case |
|---|---|---|---|---|---|
| GPT-4o | OpenAI via Foundry | $2.50 | $10.00 | 128K | Complex reasoning, agents, multimodal |
| GPT-4o mini | OpenAI via Foundry | $0.15 | $0.60 | 128K | High-volume, cost-sensitive workloads |
| GPT-4.1 | OpenAI via Foundry | $2.00 | $8.00 | 1M | Long-document chains, agentic planning |
| Llama 3.3 70B | Meta via Azure Marketplace | ~$0.59* | ~$0.79* | 128K | Open-source alternative, data sovereignty |
| Mistral Large 3 | Mistral via Azure Marketplace | $0.50 | $1.50 | 128K | Multilingual, instruction-following tasks |
| Phi-4-mini | Microsoft via Foundry | ~$0.07* | ~$0.23* | 128K | Classification, extraction, routing, SLM tasks |

GPT-4o Token Pricing on Azure: What the Rate Card Does Not Show

GPT-4o token pricing on Azure at $2.50 input and $10.00 output per million tokens is the most widely referenced figure in enterprise AI budgets, and also the most commonly misapplied one. The headline input price is only meaningful if a workload is almost entirely input-heavy. Most production deployments are not.

A customer service chatbot sending a 500-token system prompt plus a 100-token user message and receiving a 400-token response runs a 60/40 input-to-output split. The blended effective rate for that workload is approximately $5.50 per million tokens, more than double the advertised input rate. Budget calculations built on the input price alone will underestimate real inference costs significantly.
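The blended-rate arithmetic can be checked with a short sketch. The helper name `blended_rate` is an assumption introduced for illustration; the token counts and rates are the ones from the chatbot example above.

```python
def blended_rate(input_share: float, input_rate: float, output_rate: float) -> float:
    """Weighted average price per million tokens for a given input share (0 to 1)."""
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    return input_share * input_rate + (1.0 - input_share) * output_rate


# Chatbot example: 500-token system prompt + 100-token user message in,
# 400-token response out.
input_tokens = 500 + 100
output_tokens = 400
share = input_tokens / (input_tokens + output_tokens)

rate = blended_rate(share, input_rate=2.50, output_rate=10.00)
print(f"Input share: {share:.0%}, blended rate: ${rate:.2f} per 1M tokens")
```

Re-running the function with a different `input_share` shows how quickly the effective rate moves: an output-heavy workload drifts toward $10.00, an input-heavy one toward $2.50.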

Three additional factors shift the actual GPT-4o token cost on Azure beyond the base rate:

- Context window utilisation. GPT-4o supports a 128K context window. RAG pipelines injecting multiple retrieved document chunks per request frequently push input token counts to 8,000 to 20,000 per call. At that scale, input costs per call match or exceed output costs: 8,000 input tokens cost $0.02, the same as a 2,000-token response.

- Prompt caching. Azure AI Foundry supports prompt caching for repeated static prefixes. System prompts and few-shot examples that appear on every call are eligible for reduced rates on cached segments. High-frequency deployments with long system prompts benefit materially from caching.

- Deployment region. Global Standard carries the lowest rates. Standard and Data Zone deployments, required for workloads with regional data residency obligations, carry a pricing uplift that should be factored into any compliance-driven architecture.

GPT-4.1 is worth noting alongside GPT-4o. At $2.00 input and $8.00 output per million tokens it is marginally cheaper and offers a 1 million token context window. For workloads involving very long documents, extended agent reasoning chains, or complex multi-turn conversations, GPT-4.1’s context capacity becomes a meaningful architectural advantage at a slightly lower cost point.

Azure Phi-4 Pricing 2026: The Small Language Model Opportunity

Azure Phi-4 pricing in 2026 makes Microsoft’s small language model the strongest cost efficiency argument in the Foundry catalogue. Phi-4-mini is priced at approximately $0.07 per million input tokens and $0.23 per million output tokens via Serverless API. That is a 35x to 40x reduction in cost versus GPT-4o for the same token volume.

On a workload processing 50 million tokens per month at a 60/40 input-to-output split, token costs come to roughly $275 on GPT-4o versus about $6.70 on Phi-4-mini. That gap scales linearly with volume. This pricing makes a tiered model architecture, where simpler tasks route to Phi-4-mini and complex tasks escalate to GPT-4o, one of the highest-return optimisations available to enterprise teams building on Azure AI Foundry.

Phi-4-mini performs reliably in production for these task categories:

- Document classification and routing. Sorting incoming invoices, contracts, support tickets, or forms into processing categories. Binary and multi-class classification tasks where GPT-4o capability is unnecessary.

- Structured field extraction. Pulling defined fields from standardised form layouts. Accurate and cost-effective when document structures are consistent.

- Intent detection. The first processing layer in a multi-model pipeline, determining what a user is asking before routing to a more capable model for complex responses.

- Short-form generation. Confirmation messages, status summaries, and templated notifications where output length is constrained and quality requirements are defined.
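A tiered architecture built on the categories above might look like the following sketch. The task labels, the `route` function, and the 70% routing share are hypothetical choices made for illustration; the rates are the ones quoted in this guide, and the 60/40 input-to-output split is an assumption.

```python
# Per-million-token rates (input, output) as quoted in this guide.
RATES = {
    "gpt-4o": (2.50, 10.00),
    "phi-4-mini": (0.07, 0.23),
}

# Hypothetical task labels mirroring the four categories listed above.
SMALL_MODEL_TASKS = {
    "classification",
    "field_extraction",
    "intent_detection",
    "short_form_generation",
}


def route(task_category: str) -> str:
    """Send known simple task types to the small model, everything else to GPT-4o."""
    return "phi-4-mini" if task_category in SMALL_MODEL_TASKS else "gpt-4o"


def token_cost(model: str, tokens_m: float, input_share: float = 0.6) -> float:
    """USD cost for `tokens_m` million tokens at an assumed input share."""
    input_rate, output_rate = RATES[model]
    return tokens_m * (input_share * input_rate + (1 - input_share) * output_rate)


def tiered_cost(tokens_m: float, small_share: float, input_share: float = 0.6) -> float:
    """Cost when `small_share` of the token volume is handled by Phi-4-mini."""
    return (token_cost("phi-4-mini", tokens_m * small_share, input_share)
            + token_cost("gpt-4o", tokens_m * (1 - small_share), input_share))


# Assumed workload: 100M tokens per month, 70% of it routable to the small model.
print(f"All GPT-4o:   ${token_cost('gpt-4o', 100):.2f}")
print(f"Tiered (70%): ${tiered_cost(100, 0.7):.2f}")
print(route("intent_detection"), route("contract_analysis"))
```

Defaulting unknown task types to the larger model keeps quality safe while the routing table is still being validated; categories move into `SMALL_MODEL_TASKS` only after a benchmark confirms the small model meets the bar.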

One important deployment note on Azure Phi-4 pricing: when Phi-4-mini is deployed on a Managed Compute endpoint rather than the Serverless API, billing changes to an hourly VM rate that applies continuously regardless of query volume. For most enterprise use cases below 100 million tokens per day on that model alone, the Serverless API path delivers a lower total cost.

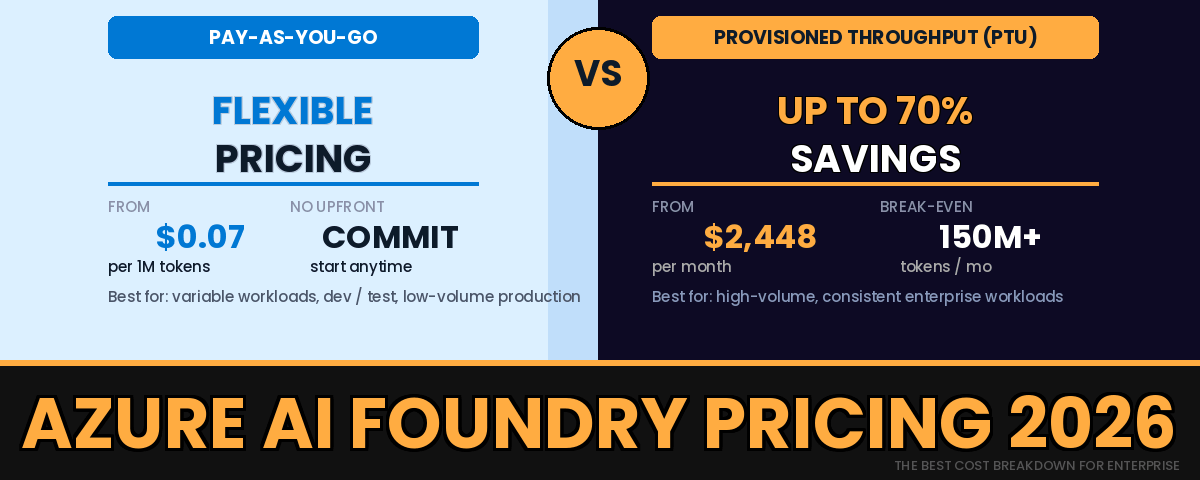

Pay-as-you-go vs Provisioned Throughput in Azure AI Foundry

Beyond the per-token rate card, Azure AI Foundry pricing 2026 is structured around two deployment billing models that apply to all models in the catalogue. Choosing the right one for each workload has a larger effect on total cost than model selection in most high-volume scenarios.

Pay-as-you-go

Pay-as-you-go (PAYG) charges per token consumed with no upfront commitment. Global Standard deployment routes requests across Microsoft’s worldwide infrastructure for maximum availability and carries the lowest advertised rates. Data Zone and Regional deployments restrict traffic to defined geographic boundaries for compliance requirements and carry a rate premium. PAYG is the correct default for variable workloads, development and testing environments, and any deployment where monthly volume is below the PTU break-even threshold.

Provisioned Throughput Units (PTUs)

Provisioned Throughput Units are reserved model capacity billed at a fixed hourly rate regardless of actual usage. PTUs start at approximately $2,448 per month and can deliver up to 70% savings over PAYG rates at sustained high volumes. Global and Data Zone PTU deployments require a minimum commitment of 15 PTUs. Regional deployments require 25 to 50 PTUs.

The break-even point for GPT-4o sits at roughly 150 to 200 million tokens per month. Below that threshold, PAYG is cheaper and more flexible. Above it, PTUs deliver meaningful savings but only if token volume is consistent. A single prompt redesign can double token consumption. A product launch can multiply inference volume tenfold in days. PTU commitments should be based on three or more months of stable production data, not projected growth estimates.

Hidden Costs That Inflate Your Azure AI Foundry Bill

Enterprise Azure AI Foundry deployments consistently run 15% to 40% above token cost estimates. The gap comes from four cost categories that Microsoft’s pricing calculator does not include.

Fine-Tuned Model Endpoint Hosting

Fine-tuning a model on custom data is relatively inexpensive. Running a fine-tuning job on GPT-4o mini typically costs $5 to $10 USD for a few hundred training examples. The expensive part is keeping the resulting model available as a deployed endpoint. A fine-tuned GPT-4o deployment can cost $50 to $70 USD per day in hosting fees regardless of whether it receives a single query. Deployments created for evaluation purposes and not subsequently decommissioned accumulate thousands of dollars in charges over weeks. Every fine-tuned model deployment should carry an owner tag and an automated shutdown policy from day one.
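To make the idle-hosting risk concrete, the sketch below flags deployments that have received no traffic for more than a set number of days and estimates what they have cost while idle. The `Deployment` record, its fields, and the seven-day threshold are hypothetical; in practice the inventory would come from your own deployment and usage data. The $60 daily rate is an assumed midpoint of the $50 to $70 range above.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class Deployment:
    """Hypothetical inventory record for a fine-tuned model endpoint."""
    name: str
    owner: Optional[str]          # owner tag; None means untagged
    daily_hosting_usd: float      # hosting fee charged regardless of traffic
    last_request: date            # date of the most recent inference call


def idle_report(deployments: List[Deployment], today: date,
                max_idle_days: int = 7) -> List[Tuple[str, int, float]]:
    """Return (name, idle_days, idle_cost_usd) for endpoints idle beyond the limit."""
    report = []
    for d in deployments:
        idle_days = (today - d.last_request).days
        if idle_days > max_idle_days:
            report.append((d.name, idle_days, idle_days * d.daily_hosting_usd))
    return report


inventory = [
    Deployment("eval-ft-gpt4o", None, 60.0, date(2026, 4, 1)),
    Deployment("prod-ft-gpt4o", "search-team", 60.0, date(2026, 5, 12)),
]

for name, days, cost in idle_report(inventory, today=date(2026, 5, 13)):
    print(f"{name}: idle {days} days, about ${cost:,.0f} in hosting fees")
```

A report like this is only a detection aid; the automated shutdown policy still has to act on it.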

Azure AI Search for RAG Pipelines

Any RAG-based application on Azure AI Foundry requires a vector index, and that index lives in Azure AI Search. The Basic tier at $75 per month covers small document collections. Standard S1 at $250 per month is the realistic starting point for enterprise RAG deployments. Organisations that provision Standard S2 or S3 upfront for anticipated scale frequently find that search infrastructure costs exceed model inference costs in the first twelve months. Start at the lowest tier that meets current document volume and query throughput requirements, then scale up when usage data justifies the move.

Blob Storage for Grounding Data

Azure Blob Storage at $0.02 per GB per month is not a significant budget line for most deployments. It becomes relevant when document corpora are large, when audio or video files are involved in multimodal workflows, or when processed outputs are retained for compliance audit purposes. Budget it separately from token costs rather than treating storage as free.

Infrastructure and Support Overhead

A production Azure AI Foundry deployment requires Application Insights, Azure Monitor, Key Vault for secrets management, and a Standard support plan for SLA coverage. These components add approximately $35 to $50 per month per environment. The cost is not large individually but does not appear in Microsoft’s token calculator and compounds across development, staging, and production environments.

Azure AI Foundry Cost Calculator: 3 Real-World Scenarios

The Azure Pricing Calculator is a useful starting point for token cost estimation but produces incomplete numbers for production budget planning. The following scenarios model the full infrastructure stack so the output figures are suitable for finance and procurement conversations. Input-to-output token ratios differ by workload: roughly 67/33 for the two conversational scenarios and 83/17 for document summarisation. Workloads with longer tool-call chains or extended context will skew more input-heavy.

Scenario A: Internal Helpdesk Chatbot (5,000 Messages per Month)

| Cost Component | Assumption | GPT-4o mini | GPT-4o |
|---|---|---|---|
| Input tokens | 400 tokens per message = 2M tokens | $0.30 | $5.00 |
| Output tokens | 200 tokens per message = 1M tokens | $0.60 | $10.00 |
| Infrastructure overhead | Monitoring, retries, support | ~$40 | ~$40 |
| Estimated monthly total | | ~$41 | ~$55 |

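At this volume, infrastructure overhead dominates the bill on either model, and switching from GPT-4o to GPT-4o mini saves about $14 per month. Prompt trimming saves even less in absolute terms: cutting 100 input tokens from every message removes 0.5M tokens per month, or $1.25 on GPT-4o.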

Scenario B: Bulk Document Summarisation (10,000 PDFs per Month)

| Cost Component | Assumption | GPT-4o mini | GPT-4o |
|---|---|---|---|
| Input tokens | 5 pages x 300 tokens x 10,000 = 15M | $2.25 | $37.50 |
| Output tokens | 300-token summary x 10,000 = 3M | $1.80 | $30.00 |
| Azure Blob Storage | 50GB of PDFs at $0.02 per GB | $1.00 | $1.00 |
| Infrastructure overhead | Monitoring, retries | ~$40 | ~$40 |
| Estimated monthly total | | ~$45 | ~$109 |

The $64 per month difference at 10,000 PDFs becomes $640 at 100,000. Running a quality benchmark across 200 representative documents before choosing the cheaper model is the standard validation step for this workload type.

Scenario C: Enterprise Knowledge Search with RAG (100 Users, 10 Queries per Day)

| Cost Component | Assumption | GPT-4o mini | GPT-4o |
|---|---|---|---|
| Input tokens | 1,000 tokens per query x 30,000 = 30M | $4.50 | $75.00 |
| Output tokens | 500 tokens per query x 30,000 = 15M | $9.00 | $150.00 |
| Azure AI Search Standard S1 | Enterprise index tier | $250.00 | $250.00 |
| Azure Blob Storage | 200GB document corpus | $4.00 | $4.00 |
| Infrastructure overhead | Monitoring, support plan | ~$50 | ~$50 |
| Estimated monthly total | | ~$318 | ~$529 |

Enterprise search is where budget underestimation is most common. When GPT-4o mini is the inference model, Azure AI Search costs more than the model itself. Cost optimisation for this scenario starts by validating whether Standard S1 is necessary for the actual document volume and query throughput, not by switching models.
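The three scenario tables can be reproduced with a small calculator, which also makes it easy to swap in your own volumes. This is a sketch under the assumptions stated in the tables: the function and dictionary names are hypothetical, token volumes are in millions, and fixed costs are the approximate monthly figures used above.

```python
# (input, output) USD per 1M tokens, as quoted in this guide.
RATES = {
    "GPT-4o mini": (0.15, 0.60),
    "GPT-4o": (2.50, 10.00),
}


def scenario_total(model: str, input_m: float, output_m: float,
                   fixed_costs: dict) -> float:
    """Monthly USD total: token charges plus fixed infrastructure line items."""
    input_rate, output_rate = RATES[model]
    return input_m * input_rate + output_m * output_rate + sum(fixed_costs.values())


# name -> (input tokens in millions, output tokens in millions, fixed monthly costs)
SCENARIOS = {
    "A: helpdesk chatbot": (2, 1, {"overhead": 40.0}),
    "B: document summarisation": (15, 3, {"blob_storage": 1.0, "overhead": 40.0}),
    "C: enterprise RAG search": (30, 15, {"ai_search_s1": 250.0,
                                          "blob_storage": 4.0,
                                          "overhead": 50.0}),
}

for name, (input_m, output_m, fixed) in SCENARIOS.items():
    for model in RATES:
        total = scenario_total(model, input_m, output_m, fixed)
        print(f"{name:28s} {model:12s} ${total:8.2f}")
```

Keeping fixed costs as named line items rather than one lump sum makes it obvious which component dominates each scenario, such as Azure AI Search in Scenario C.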

How to Limit Azure AI Spend Across Azure AI Foundry Deployments

Limiting Azure AI spend is an architecture and governance decision that must be made before the first production deployment, not after the first unexpectedly large invoice. Four controls belong in every Azure AI Foundry deployment checklist.

Set Budget Alerts in Azure Cost Management

Scope a budget to the resource group containing the AI Foundry deployment. Set alerts at 50%, 80%, and 100% of the monthly target. Configure alert notifications to a shared distribution list, not to an individual engineer’s inbox. For organisations running AI Foundry across multiple teams, create per-resource-group budgets rather than a single subscription-level view. Per-team attribution from the start makes cost reporting and chargeback significantly more straightforward.

Set Token-per-Minute Limits on Every Deployment

Every model deployment in the Azure AI Foundry portal exposes a configurable tokens-per-minute (TPM) limit. Without a TPM cap in place, a poorly constructed retry loop in application code or an agent tool-call cycle that does not terminate correctly can exhaust a monthly token budget within hours. Set conservative limits during development and testing, then increase limits incrementally based on load testing results rather than estimates.
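The portal-level TPM limit is the authoritative control. As a complement, application code can carry its own guard so a runaway loop fails fast before it ever reaches the service. The sketch below is a client-side rolling-window limiter; it is not an Azure API, and the class name `TokenRateGuard` and its methods are hypothetical.

```python
import time
from collections import deque


class TokenRateGuard:
    """Client-side rolling 60-second token budget (illustrative, not an Azure API)."""

    def __init__(self, tpm_limit: int, clock=time.monotonic):
        self.tpm_limit = tpm_limit
        self._clock = clock          # injectable so the guard can be tested
        self._events = deque()       # (timestamp, tokens) per accepted request
        self._used = 0               # tokens consumed inside the current window

    def _expire(self, now: float) -> None:
        # Drop requests that are at least 60 seconds old from the window.
        while self._events and now - self._events[0][0] >= 60.0:
            _, tokens = self._events.popleft()
            self._used -= tokens

    def try_consume(self, tokens: int) -> bool:
        """Reserve `tokens` if the window has room; return False otherwise."""
        now = self._clock()
        self._expire(now)
        if self._used + tokens > self.tpm_limit:
            return False
        self._events.append((now, tokens))
        self._used += tokens
        return True


guard = TokenRateGuard(tpm_limit=10_000)
for attempt in range(4):
    allowed = guard.try_consume(4_000)   # estimated prompt + max output tokens
    print(f"attempt {attempt}: {'sent' if allowed else 'blocked by local TPM guard'}")
```

When `try_consume` returns False, the caller should back off or abort rather than retry immediately, which is exactly the failure mode the limit exists to contain.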

Tag Every Deployment at Creation Time

Apply a minimum of three resource tags to every AI Foundry deployment before it goes live: the owning team, the application name, and the environment (development, staging, production). Without tags, Azure Cost Management reports a single aggregated spend line with no decomposition by workload or team. Cost attribution, chargeback, and anomaly detection all depend on consistent tagging applied from the start. Enforce tag requirements through Azure Policy rather than relying on individual teams to apply them voluntarily.
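Azure Policy is the enforcement mechanism. A pre-deployment check in a CI pipeline can catch the same problems earlier. The sketch below validates a tag dictionary against the three tags named above; the tag keys (`team`, `application`, `environment`) and the function name are assumptions, so substitute whatever naming convention your organisation uses.

```python
# Assumed tag keys; adjust to your organisation's naming convention.
REQUIRED_TAGS = ("team", "application", "environment")
ALLOWED_ENVIRONMENTS = {"development", "staging", "production"}


def tag_problems(tags: dict) -> list:
    """Return a list of human-readable problems; an empty list means compliant."""
    problems = []
    for key in REQUIRED_TAGS:
        if not str(tags.get(key, "")).strip():
            problems.append(f"missing tag: {key}")
    environment = str(tags.get("environment", "")).strip().lower()
    if environment and environment not in ALLOWED_ENVIRONMENTS:
        problems.append(f"invalid environment: {environment}")
    return problems


print(tag_problems({"team": "search", "application": "kb-rag",
                    "environment": "production"}))
print(tag_problems({"team": "search", "environment": "prod"}))
```

Failing the pipeline when the list is non-empty stops untagged deployments before they start accruing unattributed spend.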

Validate Model Selection Before Scaling

The most consistent source of avoidable cost in Azure AI Foundry deployments is defaulting to GPT-4o for all tasks regardless of complexity. Before any workload scales past a few hundred users or documents per day, run a structured accuracy benchmark across 200 to 500 representative real-world inputs using a lower-cost model such as GPT-4o mini or Phi-4-mini. Score the results against the defined acceptance criteria. Two to three days of validation work regularly justifies a model switch that reduces monthly production costs by thousands of dollars.
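A benchmark of this kind can be as simple as the following sketch. The `predict` callables stand in for calls to each model and are stubs here; the acceptance thresholds (`min_accuracy`, `max_drop`) are hypothetical values that should come from your own acceptance criteria.

```python
from typing import Callable, Sequence, Tuple


def accuracy(predict: Callable[[str], str],
             examples: Sequence[Tuple[str, str]]) -> float:
    """Share of (input, expected_label) examples the predictor gets exactly right."""
    if not examples:
        raise ValueError("benchmark needs at least one example")
    correct = sum(1 for text, expected in examples if predict(text) == expected)
    return correct / len(examples)


def can_switch(candidate_acc: float, baseline_acc: float,
               min_accuracy: float = 0.95, max_drop: float = 0.02) -> bool:
    """Accept the cheaper model only if it clears the bar and stays near the baseline."""
    return candidate_acc >= min_accuracy and (baseline_acc - candidate_acc) <= max_drop


# Stub predictors standing in for real model calls (assumption for illustration).
def baseline_model(text: str) -> str:
    return text.split()[0].rstrip(":")


def candidate_model(text: str) -> str:
    return "invoice"


examples = [
    ("invoice 123", "invoice"),
    ("contract nda", "contract"),
    ("ticket: login failure", "ticket"),
    ("invoice 456", "invoice"),
]

base = accuracy(baseline_model, examples)
cand = accuracy(candidate_model, examples)
print(f"baseline={base:.2f} candidate={cand:.2f} switch={can_switch(cand, base)}")
```

Exact-match scoring suits classification and extraction tasks; generation tasks need a different scorer, but the accept-or-reject gate keeps the same shape.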


What is Azure AI Foundry pricing in 2026?

Azure AI Foundry pricing 2026 is structured as a token-based pay-as-you-go model where input tokens and output tokens are billed separately at rates that vary by model. GPT-4o costs $2.50 per million input tokens and $10.00 per million output tokens. GPT-4o mini is priced at $0.15 input and $0.60 output per million tokens. Phi-4-mini, the most affordable option, sits at approximately $0.07 input and $0.23 output per million tokens. Production deployments also incur costs for Azure AI Search, Blob Storage, monitoring infrastructure, and support plans, which typically add 15% to 40% above the raw token bill. Always verify current rates on the official Azure AI Foundry pricing page before finalising a budget.

What is GPT-4o token pricing on Azure?

GPT-4o token pricing on Azure AI Foundry under Global Standard pay-as-you-go is $2.50 per million input tokens and $10.00 per million output tokens as of 2026. Regional and Data Zone deployments carry a premium above those rates for data residency compliance. The effective blended rate for a typical production workload with a 60/40 input-to-output token split is approximately $5.50 per million tokens, not the headline input figure. Context window size, prompt caching eligibility, and deployment region all affect the final per-token cost.

What is Azure Phi-4 pricing in 2026?

Azure Phi-4-mini pricing in 2026 is approximately $0.07 per million input tokens and $0.23 per million output tokens under Serverless API pay-as-you-go billing. This makes Phi-4-mini 35 to 40 times cheaper than GPT-4o for the same token volume. Phi-4-mini is suited to classification, structured data extraction, intent routing, and short-form generation tasks. Deploying Phi-4-mini on a Managed Compute endpoint instead of the Serverless API switches billing to an hourly VM rate that runs continuously regardless of query volume. The Serverless API path is cheaper for most enterprise use cases below 100 million tokens per day on that model.

Is there a free Azure AI Foundry cost calculator?

Microsoft provides a free Azure Pricing Calculator at azure.microsoft.com/pricing/calculator that estimates token costs for Azure AI Foundry model deployments. The limitation is that the calculator covers inference token costs only and does not account for Azure AI Search, Blob Storage, fine-tuned model hosting, monitoring infrastructure, or support plan costs. Enterprise deployments consistently run 15% to 40% above the calculator estimate once the full infrastructure stack is in place. The three scenario tables in this guide model the complete cost for a small chatbot, bulk document processing, and enterprise search to provide more realistic monthly estimates.

How do you limit Azure AI spend on Azure AI Foundry?

The four most effective controls for limiting Azure AI spend on Azure AI Foundry are: configuring budget alerts at 50%, 80%, and 100% thresholds in Azure Cost Management and routing them to a shared team inbox; setting tokens-per-minute limits on every model deployment to prevent runaway agent loops or retry policies from exhausting the budget in a short period; tagging every deployment with the owning team, application name, and environment before it goes live to enable per-team cost attribution; and running a model quality benchmark before scaling to determine whether GPT-4o mini or Phi-4-mini can replace GPT-4o for the workload in question.

What is the difference between pay-as-you-go and Provisioned Throughput Units in Azure AI Foundry?

Pay-as-you-go charges per token consumed with no upfront commitment and suits variable or low-volume workloads. Provisioned Throughput Units (PTUs) are reserved capacity billed at a fixed hourly rate regardless of actual usage, starting at approximately $2,448 per month. PTUs deliver up to 70% savings over pay-as-you-go rates and remove rate-limit variability at peak load. The break-even for GPT-4o is approximately 150 to 200 million tokens per month. Below that threshold, pay-as-you-go is more cost-effective. Above it, PTUs make commercial sense for consistent high-volume production workloads with at least three months of stable usage data to support the commitment.

Is Azure AI Foundry free?

Azure AI Foundry does not have a dedicated free tier for production use. New Azure accounts receive $200 in credit valid for 30 days, which can be applied to Foundry service consumption. The Foundry playground and model evaluation tools are accessible at no charge, but inference calls that consume tokens are billed at the standard pay-as-you-go rates from the first token. No ongoing free allowance is available once the initial credit period expires.

How does Llama pricing on Azure compare to GPT-4o?

Llama 3.3 70B on Azure AI Foundry via the Serverless API is priced at approximately $0.59 per million input tokens and $0.79 per million output tokens through Azure Marketplace. GPT-4o costs $2.50 per million input tokens and $10.00 per million output tokens. Llama offers a meaningful cost reduction for high-volume workloads where the model meets the quality bar. Llama charges pass through Meta as the provider via Azure Marketplace and may not count toward Microsoft Azure Consumption Commitment balances in the same way as Microsoft-native service charges. Confirm the MACC treatment with your Microsoft account team before committing to Llama for large-scale workloads.

All pricing figures reflect May 2026 Global Standard pay-as-you-go rates. Microsoft updates these with each major model release. Verify current rates at the Azure OpenAI Service pricing page and the Azure AI Foundry Models pricing page before committing to a production budget.

Next Step

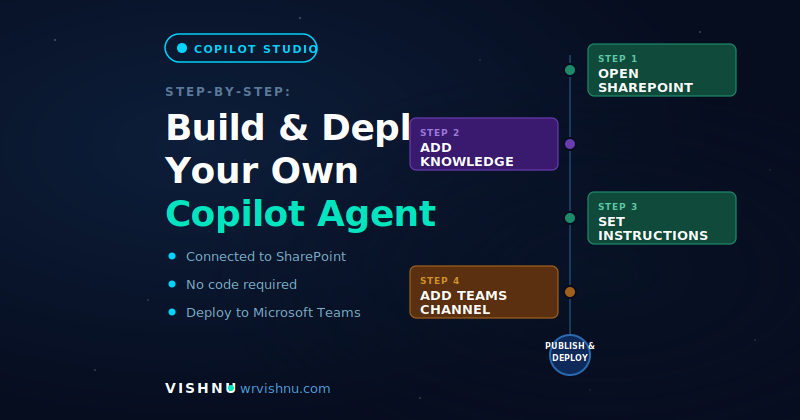

Ready to build? See the guide on Azure AI Foundry vs Copilot Studio to decide which platform fits your agent architecture before you commit to a deployment model.